LNet Router Config Guide

This document provides procedures to configure and tune an LNet router. It also gives detailed instructions for connecting an InfiniBand network to Intel OPA nodes through an LNet router.

LNet

LNet supports different network types such as Ethernet, InfiniBand, Intel Omni-Path, and proprietary network technologies such as Cray’s Gemini. It routes LNet messages between different LNet networks using LNet routing, which provides an efficient protocol for bridging different types of networks. LNet runs in Linux kernel space and allows full RDMA throughput and zero-copy communication when the underlying network supports them. Lustre can initiate a multi-OST read or write using a single Remote Procedure Call (RPC), which allows the client to access data using RDMA at near peak bandwidth rates.

The Multi-Rail (MR) feature, implemented in Lustre 2.10.X, allows multiple interfaces of the same type on a node to be grouped together under the same LNet network (for example tcp0 or o2ib0). These interfaces can then be used simultaneously to carry LNet traffic. MR can also use multiple interfaces configured on different networks. For example, OPA and MLX interfaces can be grouped under their respective LNet networks and then used with the MR feature to carry LNet traffic simultaneously.

LNet Configuration Example

An LNet router is a specialized Lustre client on which the Lustre file system is not mounted and only LNet is running. A single LNet router can serve different file systems.

For the above example:

- Servers are on LAN1, a Mellanox based InfiniBand network – 10.10.0.0/24

- Clients are on LAN2, an Intel OPA network – 10.20.0.0/24

- Routers are on LAN1 at 10.10.0.20 and 10.10.0.21, and on LAN2 at 10.20.0.29 and 10.20.0.30

The network configuration on the nodes can be done either by adding module parameters to /etc/modprobe.d/lustre.conf or dynamically by using the lnetctl command utility. The current configuration can also be exported to a YAML file and re-applied later by importing that file.

Network Configuration by adding module parameters in lustre.conf

Servers:

options lnet networks="o2ib1(ib0)" routes="o2ib2 10.10.0.20@o2ib1"

Routers:

options lnet networks="o2ib1(ib0),o2ib2(ib1)" "forwarding=enabled"

Clients:

options lnet networks="o2ib2(ib0)" routes="o2ib1 10.20.0.29@o2ib2"
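The three module-parameter lines above follow one pattern, which can be sketched in Python. This is only an illustration: `lnet_options` is a hypothetical helper, not part of Lustre, and it simply reproduces the quoting used in this guide.

```python
def lnet_options(networks, routes=None, forwarding=False):
    """Build one 'options lnet' line for /etc/modprobe.d/lustre.conf.

    networks: list of (lnet_name, interface) pairs
    routes:   optional list of (dest_lnet, gateway_nid) pairs
    """
    nets = ",".join(f"{net}({ifc})" for net, ifc in networks)
    parts = [f'networks="{nets}"']
    if routes:
        joined = "; ".join(f"{dest} {gw}" for dest, gw in routes)
        parts.append(f'routes="{joined}"')
    if forwarding:
        # Same quoting as the router line in this guide.
        parts.append('"forwarding=enabled"')
    return "options lnet " + " ".join(parts)


# Values taken from the LAN1/LAN2 example in this section.
server_line = lnet_options([("o2ib1", "ib0")], routes=[("o2ib2", "10.10.0.20@o2ib1")])
router_line = lnet_options([("o2ib1", "ib0"), ("o2ib2", "ib1")], forwarding=True)
client_line = lnet_options([("o2ib2", "ib0")], routes=[("o2ib1", "10.20.0.29@o2ib2")])

for line in (server_line, router_line, client_line):
    print(line)
```

Only routers set forwarding; servers and clients each name the router NID on their own side as the gateway to the remote network.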

NOTE: LNet must be restarted to apply the new configuration. To do this, unconfigure the LNet network and configure it again. Make sure that the Lustre network and Lustre file system are stopped before unloading the modules.

// To unload and load LNet module

modprobe -r lnet

modprobe lnet

// To unconfigure and reconfigure LNet

lnetctl lnet unconfigure

lnetctl lnet configure

Dynamic Network Configuration using lnetctl command

Servers:

lnetctl net add --net o2ib1 --if ib0

lnetctl route add --net o2ib2 --gateway 10.10.0.20@o2ib1

lnetctl peer add --nid 10.10.0.20@o2ib1

Routers:

lnetctl net add --net o2ib1 --if ib0

lnetctl net add --net o2ib2 --if ib1

lnetctl peer add --nid 10.10.0.1@o2ib1

lnetctl peer add --nid 10.20.0.1@o2ib2

lnetctl set routing 1

Clients:

lnetctl net add --net o2ib2 --if ib0

lnetctl route add --net o2ib1 --gateway 10.20.0.29@o2ib2

lnetctl peer add --nid 10.20.0.29@o2ib2

Importing/Exporting configuration using a YAML file format

// To export the current configuration to a YAML file

lnetctl export FILE.yaml

lnetctl export > FILE.yaml

// To import the configuration from a YAML file

lnetctl import FILE.yaml

lnetctl import < FILE.yaml

There is a default lnet.conf file installed at /etc/lnet.conf which has an example configuration in YAML format. Another example of a configuration in a YAML file is:

net:

- net type: o2ib1

local NI(s):

- nid: 10.10.0.1@o2ib1

status: up

interfaces:

0: ib0

tunables:

peer_timeout: 180

peer_credits: 8

peer_buffer_credits: 0

credits: 256

lnd tunables:

peercredits_hiw: 64

map_on_demand: 32

concurrent_sends: 256

fmr_pool_size: 2048

fmr_flush_trigger: 512

fmr_cache: 1

tcp bonding: 0

dev cpt: -1

CPT: "[0]"

route:

- net: o2ib2

gateway: 10.10.0.20@o2ib1

hop: 1

priority: 0

state: up

peer:

- primary nid: 10.10.0.20@o2ib1

Multi-Rail: False

peer ni:

- nid: 10.10.0.20@o2ib1

state: up

max_ni_tx_credits: 8

available_tx_credits: 8

min_tx_credits: 7

tx_q_num_of_buf: 0

available_rtr_credits: 8

min_rtr_credits: 8

refcount: 4

global:

numa_range: 0

max_intf: 200

discovery: 1

LNet provides a mechanism to monitor each route entry. LNet pings each gateway identified in the route entry at a regular, configurable interval (live_router_check_interval) to ensure that it is alive. If sending over a specific route fails, or if the router pinger determines that the gateway is down, the route is marked as down and is not used. The gateway is subsequently pinged at a regular, configurable interval (dead_router_check_interval) to determine when it becomes alive again.

Multi-Rail LNet Configuration Example

If the routers are MR enabled, the routers can be added as peers with multiple interfaces on the clients and the servers. The MR algorithm will then ensure that both interfaces of the routers are used when sending traffic to the router. However, a single interface failure will still cause the entire router to go down. With the network topology example in Figure 1 above, LNet MR can be configured as shown below:

Servers:

lnetctl net add --net o2ib1 --if ib0,ib1

lnetctl route add --net o2ib2 --gateway 10.10.0.20@o2ib1

lnetctl peer add --nid 10.10.0.20@o2ib1,10.10.0.21@o2ib1

Routers:

lnetctl net add --net o2ib1 --if ib0,ib1

lnetctl net add --net o2ib2 --if ib2,ib3

lnetctl peer add --nid 10.10.0.1@o2ib1,10.10.0.2@o2ib1

lnetctl peer add --nid 10.20.0.1@o2ib2,10.20.0.2@o2ib2

lnetctl set routing 1

Clients:

lnetctl net add --net o2ib2 --if ib0,ib1

lnetctl route add --net o2ib1 --gateway 10.20.0.29@o2ib2

lnetctl peer add --nid 10.20.0.29@o2ib2,10.20.0.30@o2ib2

Fine-Grained Routing

The routes parameter identifies the LNet routers in a Lustre configuration and tells a node which route to use when forwarding traffic. It specifies a semicolon-separated list of router definitions.

routes=dest_lnet [hop] [priority] router_NID@src_lnet; \ dest_lnet [hop] [priority] router_NID@src_lnet

An alternative syntax consists of a colon-separated list of router definitions:

routes=dest_lnet: [hop] [priority] router_NID@src_lnet \ [hop] [priority] router_NID@src_lnet

When there are two or more LNet routers, it is possible to give weighted priorities to each router using the priority parameter. Here are some possible reasons for using this parameter:

- One of the routers is more capable than the other.

- One router is a primary router and the other is a back-up.

- One router is for one section of clients and the other is for another section.

- Each router is moving traffic to a different physical location.

The priority parameter is optional and need not be specified if no priority exists. The hop parameter specifies the number of hops to the destination. When a node forwards traffic, the route with the least number of hops is used. If multiple routes to the same destination network have the same number of hops, the traffic is distributed between these routes in a round-robin fashion. To reach the LNet network dest_lnet, the next hop for a given node is the LNet router with the NID router_NID in the LNet network src_lnet.

Given a sufficiently well-architected system, it is possible to map the flow to and from every client or server. This type of routing has also been called fine-grained routing.
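The semicolon-separated routes syntax and the hop rule described above can be illustrated with a short Python sketch. It is not LNet code: `parse_routes` and `next_hop_picker` are hypothetical names, the defaults of hop 1 and priority 0 are assumptions taken from the sample `lnetctl` output in this guide, the gateway 10.10.0.22@o2ib1 is invented for the example, and the sketch deliberately ignores priority when selecting because the text does not define how priority and hop count interact.

```python
from itertools import cycle


def parse_routes(spec):
    """Parse 'dest [hop] [priority] router_NID@src_lnet; ...' into dicts."""
    routes = []
    for entry in spec.split(";"):
        fields = entry.split()
        if not fields:
            continue  # tolerate a trailing semicolon
        if len(fields) < 2 or len(fields) > 4:
            raise ValueError(f"malformed route entry: {entry!r}")
        numbers = [int(f) for f in fields[1:-1]]
        routes.append({
            "dest": fields[0],
            "hop": numbers[0] if len(numbers) >= 1 else 1,       # assumed default
            "priority": numbers[1] if len(numbers) == 2 else 0,  # assumed default
            "gateway": fields[-1],
        })
    return routes


def next_hop_picker(routes, dest):
    """Round-robin iterator over the gateways with the fewest hops to dest."""
    candidates = [r for r in routes if r["dest"] == dest]
    if not candidates:
        raise LookupError(f"no route to {dest}")
    fewest = min(r["hop"] for r in candidates)
    return cycle([r["gateway"] for r in candidates if r["hop"] == fewest])


routes = parse_routes(
    "o2ib2 1 10.10.0.20@o2ib1; o2ib2 1 10.10.0.21@o2ib1; o2ib2 2 10.10.0.22@o2ib1"
)
picker = next_hop_picker(routes, "o2ib2")
first_four = [next(picker) for _ in range(4)]
print(first_four)
```

The two one-hop gateways alternate, and the two-hop gateway is never chosen while a shorter route exists.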

Advanced Routing Parameters

In a Lustre configuration where different types of LNet networks are connected by routers, several kernel module parameters can be set to monitor and improve routing performance. These parameters are set in the /etc/modprobe.d/lustre.conf file. The routing-related parameters are:

- auto_down - Enable/disable (1/0) the automatic marking of router state as up or down. The default value is 1. To disable router marking, set:

options lnet auto_down=0

- avoid_asym_router_failure - Specifies that if even one interface of a router is down for some reason, the entire router is marked as down. This is important because if nodes are not aware that the interface on one side is down, they will still keep pushing data to the other side presuming that the router is healthy, when it really is not. To turn it on:

options lnet avoid_asym_router_failure=1

- live_router_check_interval - Specifies a time interval in seconds after which the router checker will ping the live routers. The default value is 60. To set the value to 50, use:

options lnet live_router_check_interval=50

- dead_router_check_interval - Specifies a time interval in seconds after which the router checker will check the dead routers. The default value is 60. To set the value to 50:

options lnet dead_router_check_interval=50

- router_ping_timeout - Specifies a timeout for the router checker when it checks live or dead routers. The router checker sends a ping message to each dead or live router once every dead_router_check_interval or live_router_check_interval respectively. The default value is 50. To set the value to 60:

options lnet router_ping_timeout=60

- check_routers_before_use - Specifies that routers are to be checked before use. Set to off by default. If this parameter is set to on, the dead_router_check_interval parameter must be given a positive integer value.

options lnet check_routers_before_use=1

The router_checker obtains the following information from each router:

- time the router was disabled

- elapsed disable time

If the router_checker does not get a reply message from the router within router_ping_timeout seconds, it considers the router to be down. If a router that is marked “up” responds to a ping, the timeout is reset. If 100 packets have been sent successfully through a router, the sent-packets counter for that router will have a value of 100.

The statistics data of an LNet router can be found in /proc/sys/lnet/stats. If no interval is specified, then statistics are sampled and printed only one time. Otherwise, statistics are sampled and printed at the specified interval (in seconds). These statistics can also be displayed using the lnetctl utility, as shown below:

# lnetctl stats show

statistics:

msgs_alloc: 0

msgs_max: 2

errors: 0

send_count: 887

recv_count: 887

route_count: 0

drop_count: 0

send_length: 656

recv_length: 70048

route_length: 0

drop_length: 0
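For scripted monitoring, the counters shown above can be read into a dictionary. The following Python sketch parses text shaped like the sample output; it uses a plain line parser rather than a YAML library, `parse_stats` is a hypothetical helper, and reading a non-zero route_count as "this node has forwarded messages" is an interpretation, not something stated in this guide.

```python
def parse_stats(text):
    """Turn 'key: value' lines from 'lnetctl stats show' into a dict of ints."""
    stats = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or ":" not in line:
            continue
        key, _, value = line.partition(":")
        value = value.strip()
        if value:  # the 'statistics:' heading has no value and is skipped
            stats[key.strip()] = int(value)
    return stats


SAMPLE = """\
statistics:
    msgs_alloc: 0
    msgs_max: 2
    errors: 0
    send_count: 887
    recv_count: 887
    route_count: 0
    drop_count: 0
    send_length: 656
    recv_length: 70048
    route_length: 0
    drop_length: 0
"""

stats = parse_stats(SAMPLE)
has_forwarded = stats["route_count"] > 0
print(stats["send_count"], stats["drop_count"], has_forwarded)
```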

The ping response also provides the status of the NIDs of the node being pinged. In this way, the pinging node knows whether to keep using this node as a next-hop or not. If one of the NIDs of the router is down and the avoid_asym_router_failure is set, then that router is no longer used.
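The decision described in this section can be written as a small predicate. This Python sketch only restates the prose: `router_usable` and its arguments are hypothetical and do not correspond to LNet internals.

```python
def router_usable(ping_replied, nid_status, avoid_asym_router_failure):
    """Decide whether a gateway should still be used as a next hop.

    ping_replied: True if a reply arrived within router_ping_timeout seconds
    nid_status:   dict mapping each NID reported by the router to 'up' or 'down'
    avoid_asym_router_failure: value of the module parameter (True or False)
    """
    if not ping_replied:
        return False  # no reply in time: the router is considered down
    if avoid_asym_router_failure and any(s != "up" for s in nid_status.values()):
        return False  # one side of the router is down, so avoid it entirely
    return True


# NIDs reuse the router addresses from the example topology in this guide.
healthy = {"10.10.0.20@o2ib1": "up", "10.20.0.29@o2ib2": "up"}
one_side_down = {"10.10.0.20@o2ib1": "up", "10.20.0.29@o2ib2": "down"}

print(router_usable(True, healthy, True))
print(router_usable(True, one_side_down, True))
print(router_usable(True, one_side_down, False))
```

With the parameter disabled, a router whose far-side interface is down would still be used, which is the situation avoid_asym_router_failure is meant to prevent.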

LNet Dynamic Configuration

LNet can be configured dynamically using the lnetctl utility. The lnetctl utility can be used to initialize LNet without bringing up any network interfaces. This gives the user the flexibility to add interfaces after LNet has been loaded. In general, the lnetctl format is as follows:

lnetctl cmd subcmd [options]

The following configuration items are managed by the tool:

- Configuring/Unconfiguring LNet

- Adding/Removing/Showing Networks

- Adding/Removing/Showing Peers

- Adding/Removing/Showing Routes

- Enabling/Disabling routing

- Configuring Router Buffer Pools

Configuring/Unconfiguring LNet

After LNet has been loaded via modprobe (modprobe lnet), the lnetctl utility can be used to configure LNet without bringing up networks that are specified in the module parameters. It can also be used to configure network interfaces specified in the module parameters by providing the --all option.

// To configure LNet

lnetctl lnet configure [--all]

// To unconfigure LNet

lnetctl lnet unconfigure

Adding/Removing/Showing Networks

Now LNet is ready to be configured with networks to be added. To add an o2ib1 LNet network on ib0 and ib1 interfaces:

lnetctl net add --net o2ib1 --if ib0,ib1

Using the show subcommands, it is possible to review the configuration:

lnetctl net show -v

net:

- net type: lo

local NI(s):

- nid: 0@lo

status: up

statistics:

send_count: 0

recv_count: 0

drop_count: 0

tunables:

peer_timeout: 0

peer_credits: 0

peer_buffer_credits: 0

credits: 0

lnd tunables:

tcp bonding: 0

dev cpt: 0

CPT: "[0]"

- net type: o2ib1

local NI(s):

- nid: 192.168.5.151@o2ib1

status: up

interfaces:

0: ib0

statistics:

send_count: 0

recv_count: 0

drop_count: 0

tunables:

peer_timeout: 180

peer_credits: 8

peer_buffer_credits: 0

credits: 256

lnd tunables:

peercredits_hiw: 64

map_on_demand: 32

concurrent_sends: 256

fmr_pool_size: 2048

fmr_flush_trigger: 512

fmr_cache: 1

tcp bonding: 0

dev cpt: -1

CPT: "[0]"

- nid: 192.168.5.152@o2ib1

status: up

interfaces:

0: ib1

statistics:

send_count: 0

recv_count: 0

drop_count: 0

tunables:

peer_timeout: 180

peer_credits: 8

peer_buffer_credits: 0

credits: 256

lnd tunables:

peercredits_hiw: 64

map_on_demand: 32

concurrent_sends: 256

fmr_pool_size: 2048

fmr_flush_trigger: 512

fmr_cache: 1

tcp bonding: 0

dev cpt: -1

CPT: "[0]"

To delete a net:

lnetctl net del --net o2ib1 --if ib0

The resultant configuration would look like:

lnetctl net show -v

net:

- net type: lo

local NI(s):

- nid: 0@lo

status: up

statistics:

send_count: 0

recv_count: 0

drop_count: 0

tunables:

peer_timeout: 0

peer_credits: 0

peer_buffer_credits: 0

credits: 0

lnd tunables:

tcp bonding: 0

dev cpt: 0

CPT: "[0]"

- net type: o2ib1

local NI(s):

- nid: 192.168.5.151@o2ib1

status: up

interfaces:

0: ib0

statistics:

send_count: 0

recv_count: 0

drop_count: 0

tunables:

peer_timeout: 180

peer_credits: 8

peer_buffer_credits: 0

credits: 256

lnd tunables:

peercredits_hiw: 64

map_on_demand: 32

concurrent_sends: 256

fmr_pool_size: 2048

fmr_flush_trigger: 512

fmr_cache: 1

tcp bonding: 0

dev cpt: -1

CPT: "[0]"

Adding/Removing/Showing Peers

lnetctl command to add remote peers:

lnetctl peer add --prim_nid 192.168.5.161@o2ib1 --nid 192.168.5.162@o2ib1

Verify the added peers configuration:

lnetctl peer show -v

peer:

- primary nid: 192.168.5.161@o2ib1

Multi-Rail: True

peer ni:

- nid: 192.168.5.161@o2ib1

state: NA

max_ni_tx_credits: 8

available_tx_credits: 8

min_tx_credits: 7

tx_q_num_of_buf: 0

available_rtr_credits: 8

min_rtr_credits: 8

refcount: 1

statistics:

send_count: 2

recv_count: 2

drop_count: 0

- nid: 192.168.5.162@o2ib1

state: NA

max_ni_tx_credits: 8

available_tx_credits: 8

min_tx_credits: 7

tx_q_num_of_buf: 0

available_rtr_credits: 8

min_rtr_credits: 8

refcount: 1

statistics:

send_count: 1

recv_count: 1

drop_count: 0

To delete a peer:

lnetctl peer del --prim_nid 192.168.5.161@o2ib1 --nid 192.168.5.162@o2ib1

Adding/Removing/Showing Routes

To add a routing path:

lnetctl route add --net o2ib2 --gateway 192.168.5.153@o2ib1 --hop 2 --prio 1

Verify the added route information:

lnetctl route show --verbose

route:

- net: o2ib2

gateway: 192.168.5.153@o2ib1

hop: 2

priority: 1

state: down

To make this configuration permanent, it must be stored as a YAML file at /etc/sysconfig/lnet.conf. The live configuration can be exported to that file:

lnetctl export > /etc/sysconfig/lnet.conf

The lnet script in /etc/init.d/ is compatible with Dynamic LNet Configuration (DLC) and should be enabled so that it starts at boot time:

systemctl enable lnet

Enabling/Disabling Routing

To enable/disable routing on a node:

// To enable routing

lnetctl set routing 1

// To disable routing

lnetctl set routing 0

To check the routing information:

lnetctl routing show

Configure Router Buffers

The router buffers are used to buffer messages which are being routed to other nodes. These buffers are shared by all CPU partitions (CPTs), so their values should be chosen with the number of CPTs in the system in mind. The minimum values of these buffers per CPT are:

#define LNET_NRB_TINY_MIN 512

#define LNET_NRB_SMALL_MIN 4096

#define LNET_NRB_LARGE_MIN 256

A value greater than 0 for router buffers will set the number of buffers accordingly. A value of 0 will reset the router buffers to system defaults. To configure router buffer pools using lnetctl:

// VALUE must be greater than or equal to 0

lnetctl set tiny_buffers 4096

lnetctl set small_buffers 8192

lnetctl set large_buffers 2048
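The rules above (values must be zero or greater, and each pool has a per-CPT minimum) can be checked before running the commands. The Python sketch below is illustrative only: the helper names are hypothetical, and it assumes that a configured value is split evenly across CPTs, which this guide implies but does not state outright.

```python
# Per-CPT minimums from the LNET_NRB_*_MIN defines quoted in this section.
MIN_PER_CPT = {"tiny_buffers": 512, "small_buffers": 4096, "large_buffers": 256}


def buffer_commands(requested):
    """Return the 'lnetctl set' commands for the requested pool sizes."""
    commands = []
    for name, value in requested.items():
        if name not in MIN_PER_CPT:
            raise ValueError(f"unknown buffer pool: {name}")
        if value < 0:
            raise ValueError(f"{name} must be >= 0, got {value}")
        commands.append(f"lnetctl set {name} {value}")
    return commands


def below_minimum(requested, ncpts):
    """List pools whose per-CPT share would fall under the documented minimum.

    Assumption: the configured value is divided evenly across ncpts CPTs.
    A value of 0 means 'reset to defaults' and is not checked.
    """
    return [name for name, value in requested.items()
            if value > 0 and value // ncpts < MIN_PER_CPT[name]]


requested = {"tiny_buffers": 4096, "small_buffers": 8192, "large_buffers": 2048}
commands = buffer_commands(requested)
print(commands)
print(below_minimum(requested, ncpts=4))
```

Under that assumption, 8192 small buffers spread over four CPTs gives 2048 per CPT, which is below the 4096 minimum, so the number of CPTs matters when picking these values.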

ARP flux issue for MR node

Due to Linux routing quirks, if there are two network interfaces on the same node, the HW address returned in the ARP reply for a specific IP might not be the one for the interface being ARPed. This causes problems for o2iblnd, because it resolves the address using IPoIB and gets the wrong InfiniBand address. To get around this problem, routing entries and rules must be set up to tell the Linux kernel to respond with the correct HW address. Below is an example setup on a node with 2 Ethernet, 2 MLX and 2 OPA interfaces.

- OPA interfaces:

- ib0: 192.168.1.1

- ib1: 192.168.2.1

- MLX interfaces:

- ib2: 172.16.1.1

- ib3: 172.16.2.1

#Setting ARP so it doesn't broadcast

sysctl -w net.ipv4.conf.all.rp_filter=0

sysctl -w net.ipv4.conf.ib0.arp_ignore=1

sysctl -w net.ipv4.conf.ib0.arp_filter=0

sysctl -w net.ipv4.conf.ib0.arp_announce=2

sysctl -w net.ipv4.conf.ib0.rp_filter=0

sysctl -w net.ipv4.conf.ib1.arp_ignore=1

sysctl -w net.ipv4.conf.ib1.arp_filter=0

sysctl -w net.ipv4.conf.ib1.arp_announce=2

sysctl -w net.ipv4.conf.ib1.rp_filter=0

sysctl -w net.ipv4.conf.ib2.arp_ignore=1

sysctl -w net.ipv4.conf.ib2.arp_filter=0

sysctl -w net.ipv4.conf.ib2.arp_announce=2

sysctl -w net.ipv4.conf.ib2.rp_filter=0

sysctl -w net.ipv4.conf.ib3.arp_ignore=1

sysctl -w net.ipv4.conf.ib3.arp_filter=0

sysctl -w net.ipv4.conf.ib3.arp_announce=2

sysctl -w net.ipv4.conf.ib3.rp_filter=0

ip neigh flush dev ib0

ip neigh flush dev ib1

ip neigh flush dev ib2

ip neigh flush dev ib3

echo 200 ib0 >> /etc/iproute2/rt_tables

echo 201 ib1 >> /etc/iproute2/rt_tables

echo 202 ib2 >> /etc/iproute2/rt_tables

echo 203 ib3 >> /etc/iproute2/rt_tables

ip route add 192.168.0.0/16 dev ib0 proto kernel scope link src 192.168.1.1 table ib0

ip route add 192.168.0.0/16 dev ib1 proto kernel scope link src 192.168.2.1 table ib1

ip rule add from 192.168.1.1 table ib0

ip rule add from 192.168.2.1 table ib1

ip route add 172.16.0.0/16 dev ib2 proto kernel scope link src 172.16.1.1 table ib2

ip route add 172.16.0.0/16 dev ib3 proto kernel scope link src 172.16.2.1 table ib3

ip rule add from 172.16.1.1 table ib2

ip rule add from 172.16.2.1 table ib3

ip route flush cache
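The command list above repeats the same four steps for every interface: set the ARP sysctls, flush the neighbour cache, register a routing table, and add a source-based route and rule. A Python sketch that generates the same kind of list for an arbitrary set of interfaces is shown below. `arp_flux_commands` is a hypothetical helper; it only builds strings and does not execute anything, and it emits each interface's route and rule together rather than in the exact order used above.

```python
def arp_flux_commands(interfaces, first_table_id=200):
    """Build the sysctl/ip commands for a list of (ifname, ip, subnet) tuples."""
    cmds = ["sysctl -w net.ipv4.conf.all.rp_filter=0"]
    settings = (("arp_ignore", 1), ("arp_filter", 0),
                ("arp_announce", 2), ("rp_filter", 0))
    for name, _, _ in interfaces:
        for key, value in settings:
            cmds.append(f"sysctl -w net.ipv4.conf.{name}.{key}={value}")
    for name, _, _ in interfaces:
        cmds.append(f"ip neigh flush dev {name}")
    for offset, (name, _, _) in enumerate(interfaces):
        # One named routing table per interface, numbered from first_table_id.
        cmds.append(f"echo {first_table_id + offset} {name} >> /etc/iproute2/rt_tables")
    for name, ip, subnet in interfaces:
        cmds.append(f"ip route add {subnet} dev {name} proto kernel "
                    f"scope link src {ip} table {name}")
        cmds.append(f"ip rule add from {ip} table {name}")
    cmds.append("ip route flush cache")
    return cmds


# Interfaces from the example node in this section.
interfaces = [
    ("ib0", "192.168.1.1", "192.168.0.0/16"),
    ("ib1", "192.168.2.1", "192.168.0.0/16"),
    ("ib2", "172.16.1.1", "172.16.0.0/16"),
    ("ib3", "172.16.2.1", "172.16.0.0/16"),
]
cmds = arp_flux_commands(interfaces)
print("\n".join(cmds))
```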

LNet Tuning

LNet tuning is possible by passing parameters to the Lustre Network Driver (LND). The Lustre Network Driver for RDMA is the ko2iblnd kernel module. This driver is used for both Intel OPA cards and InfiniBand cards. With Lustre 2.10.X, the /usr/sbin/ko2iblnd-probe script detects the network card and sets tunable parameters for supported cards. Intel OPA and Intel True Scale cards are automatically detected and configured by the script to achieve optimal performance with Lustre. The script can be modified to detect other network cards and set optimal parameters. The following is an example of parameter settings in the configuration file (/etc/modprobe.d/ko2iblnd.conf) for a Lustre peer.

alias ko2iblnd-opa ko2iblnd

options ko2iblnd-opa peer_credits=128 peer_credits_hiw=64 credits=1024 concurrent_sends=256 ntx=2048 map_on_demand=32 fmr_pool_size=2048 fmr_flush_trigger=512 fmr_cache=1

Parameters from /etc/modprobe.d/ko2iblnd.conf are global and cannot differ between fabrics. It is therefore recommended to use the lnetctl command utility to set them correctly for each fabric and then store the configuration in /etc/sysconfig/lnet.conf so that it persists across reboots. Previously, all peers (compute nodes, LNet routers, servers) on the network required identical tunable parameters for LNet to work, independently of the hardware technology used (Intel OPA or InfiniBand). So, if you are routing into a fabric with older Lustre nodes, these parameters must be updated to apply identical options to the ko2iblnd module.

LNet uses peer credits and network interface credits to send data through the network in fixed-size chunks of 1MB. The peer_credits tunable parameter manages the number of concurrent sends to a single peer and can be monitored using the /proc/sys/lnet/peers interface. The number for peer_credits can be increased using a module parameter for the specific Lustre Network Driver (LND):

// The default value is 8

ko2iblnd-opa peer_credits=128

It is not always necessary to increase peer_credits to obtain good performance, because in very large installations an increased value can overload the network and increase the memory utilization of the OFED stack. The network interface credits tunable (credits) limits the number of concurrent sends to a single network and can be monitored using the /proc/sys/lnet/nis interface. The number of network interface credits can be increased using a module parameter for the specific Lustre Network Driver (LND):

// The default value is 64 and is shared across all the CPU partitions (CPTs).

ko2iblnd-opa credits=1024

Fast Memory Registration (FMR) is a technique to reduce memory allocation costs. In FMR, memory registration is divided in two phases:

- allocating resources needed by the registration and

- registering using resources obtained from the first step.

The resource allocation and de-allocation can be managed in batch mode, and as a result, FMR can achieve much faster memory registration. To enable FMR in LNet, the value of map_on_demand should be greater than zero.

// The default value is 0

ko2iblnd-opa map_on_demand=32

Earlier, Fast Memory Registration was supported only by Intel OPA and Mellanox FDR cards based on the mlx4 driver, but not by Mellanox FDR/EDR cards based on the mlx5 driver. The Lustre 2.10.X release adds Fast Reg memory registration support for MLX5 (v2.7.16).

| Tunable | Suggested Value | Default Value |

|---|---|---|

| peer_credits | 128 | 8 |

| peer_credits_hiw | 64 | 0 |

| credits | 1024 | 64 |

| concurrent_sends | 256 | 0 |

| ntx | 2048 | 512 |

| map_on_demand | 32 | 0 |

| fmr_pool_size | 2048 | 512 |

| fmr_flush_trigger | 512 | 384 |

| fmr_cache | 1 | 1 |

From the above table:

- peer_credits_hiw sets the high water mark at which credits start to be retrieved.

- concurrent_sends is the number of concurrent hardware sends to a single peer.

- ntx is the number of message descriptors allocated for each pool.

- fmr_pool_size is the size of the FMR pool on each CPT.

- fmr_flush_trigger is the number of dirty FMRs that triggers a pool flush.

- fmr_cache should be set to non-zero to enable FMR caching.
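The table of suggested and default values maps directly onto an options line for /etc/modprobe.d/ko2iblnd.conf. The Python sketch below builds that line from the table, listing only the tunables whose suggested value differs from the default. The helper names are hypothetical, and dropping unchanged values is a choice made for this example, not a requirement stated in this guide.

```python
# (suggested, default) pairs copied from the tunables table in this section.
TUNABLES = {
    "peer_credits": (128, 8),
    "peer_credits_hiw": (64, 0),
    "credits": (1024, 64),
    "concurrent_sends": (256, 0),
    "ntx": (2048, 512),
    "map_on_demand": (32, 0),
    "fmr_pool_size": (2048, 512),
    "fmr_flush_trigger": (512, 384),
    "fmr_cache": (1, 1),
}


def changed_tunables(table):
    """Keep only the tunables whose suggested value differs from the default."""
    return {name: suggested
            for name, (suggested, default) in table.items()
            if suggested != default}


def options_line(module_alias, values):
    """Format one 'options <alias> key=value ...' line."""
    return f"options {module_alias} " + " ".join(f"{k}={v}" for k, v in values.items())


line = options_line("ko2iblnd-opa", changed_tunables(TUNABLES))
print(line)
```

Here fmr_cache is left out because its suggested value equals the default; the example configuration in this guide lists it anyway, which is harmless.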

Configuring LNet Routers to Connect Intel OPA and InfiniBand nodes

The LNet router can be deployed using an industry-standard server with enough network cards and the LNet software stack. Designing a complete solution for a production environment is not an easy task, but Intel provides tools (LNet Self Test) to test and validate the configuration and performance in advance.

The goal is to design LNet routers with enough bandwidth to satisfy the throughput requirements of the back-end storage. The number of compute nodes connected to an LNet router normally does not change the design of the solution. The bandwidth available to an LNet router is limited by the slowest network technology connected to the router. Typically, the LNet router causes a 10-15% decline in bandwidth from the nominal hardware bandwidth of the slowest card. In every case, it is recommended to validate the implemented solution using tools provided by the network interface maker and/or the LNet Self Test utility, which is available with Lustre.

LNet routers can become congested if the number of credits (peer_credits and credits) is not set properly. For communication with routers, not only must credits and peer credits be tuned, but global router buffer credits and peer router buffer credits are also needed.
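The bandwidth guidance in this section can be turned into a rough sizing estimate. The Python sketch below applies the 10-15% decline to the slowest card and rounds up the number of routers needed for a storage throughput target. The function names and the 400 Gb/s and 100 Gb/s figures are hypothetical, and the result is only a starting point that should be validated with LNet Self Test.

```python
import math


def usable_bandwidth(slowest_nominal_gbps, loss_percent):
    """Bandwidth left after the router-induced decline on the slowest card."""
    return slowest_nominal_gbps * (100 - loss_percent) / 100


def routers_needed(target_gbps, slowest_nominal_gbps, loss_percent=15):
    """Smallest router count whose combined usable bandwidth meets the target.

    Defaults to the pessimistic end (15%) of the 10-15% range in this guide.
    """
    per_router = usable_bandwidth(slowest_nominal_gbps, loss_percent)
    return math.ceil(target_gbps / per_router)


# Hypothetical example: 400 Gb/s of back-end storage throughput and routers
# whose slowest card has a nominal bandwidth of 100 Gb/s.
print(usable_bandwidth(100, 15))
print(routers_needed(400, 100, loss_percent=15))
print(routers_needed(400, 100, loss_percent=10))
```

Four routers at nominal speed would look sufficient on paper, but after the decline a fifth is needed at both ends of the 10-15% range.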

Memory Considerations

An LNet router uses additional credit accounting when it needs to forward a packet for another peer:

- Peer Router Credit: This credit manages the number of concurrent receives from a single peer and prevents a single peer from using all router buffer resources. By default this value should be 0. If this value is 0, the LNet router uses peer_credits.

- Router Buffer Credit: This credit allows messages to be queued and non-data-payload RPCs to be selected ahead of data RPCs to avoid congestion. In fact, an LNet router has a limited number of buffers:

- tiny_router_buffers – size of buffer for messages of <1 page in size

- small_router_buffers – size of buffer for messages of 1 page in size

- large_router_buffers – size of buffer for messages >1 page in size (1MiB)

These LNet kernel module parameters can be monitored using the /proc/sys/lnet/buffers file and are available per CPT:

| Pages | Count | Credits | Min |

|---|---|---|---|

| 0 | 512 | 512 | 503 |

| 0 | 512 | 512 | 504 |

| 0 | 512 | 512 | 503 |

| 0 | 512 | 512 | 497 |

| 1 | 4096 | 4096 | 4055 |

| 1 | 4096 | 4096 | 4048 |

| 1 | 4096 | 4096 | 4056 |

| 1 | 4096 | 4096 | 4070 |

| 256 | 256 | 256 | 248 |

| 256 | 256 | 256 | 247 |

| 256 | 256 | 256 | 240 |

| 256 | 256 | 256 | 242 |

In the table above,

- Pages - It is the number of pages per buffer. This is a constant.

- Count - It is the number of allocated buffers. This is a constant.

- Credits - It is the number of buffer credits. This is a real-time value of the number of buffer credits currently available. If this value is negative, its magnitude indicates the number of queued messages.

- Min - It is the lowest number of credits ever reached in the system. This is historical data.

The memory utilization of the LNet router stack is caused by the Peer Router Credit and Router Buffer Credit parameters. An LNet router with a RAM size of 32GB or more has enough memory to sustain very large configurations for these parameters. In any case, the memory consumption of the LNet stack can be measured using the /proc/sys/lnet/lnet_memused metrics file.
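As a worked example, the buffer table shown earlier in this section can be used to estimate how much memory the router buffer payloads occupy. The Python sketch below multiplies pages per buffer by buffer count for every row. It assumes a 4096-byte page, ignores any per-buffer descriptor overhead, and uses hypothetical helper names, so it is an approximation rather than a replacement for /proc/sys/lnet/lnet_memused.

```python
PAGE_SIZE = 4096  # assumption: 4 KiB pages

# (pages, count, credits, min) rows copied from the buffers table above.
ROWS = [
    (0, 512, 512, 503), (0, 512, 512, 504), (0, 512, 512, 503), (0, 512, 512, 497),
    (1, 4096, 4096, 4055), (1, 4096, 4096, 4048), (1, 4096, 4096, 4056), (1, 4096, 4096, 4070),
    (256, 256, 256, 248), (256, 256, 256, 247), (256, 256, 256, 240), (256, 256, 256, 242),
]


def payload_bytes(rows, page_size=PAGE_SIZE):
    """Sum pages-per-buffer times buffer count over all rows, in bytes."""
    return sum(pages * count for pages, count, _, _ in rows) * page_size


def queued_messages(rows):
    """Negative credits indicate queued messages; add up their magnitudes."""
    return sum(-credits for _, _, credits, _ in rows if credits < 0)


total_mib = payload_bytes(ROWS) // (1024 * 1024)
print(total_mib, "MiB of buffer payload;", queued_messages(ROWS), "messages queued")
```

For this table the large buffers dominate: four pools of 256 one-MiB buffers account for 1024 MiB of the 1088 MiB total.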

Software Installations

Lustre Installation

Install Lustre client rpms on Lustre clients and LNet routers and Lustre server rpms on Lustre servers from the below link - https://downloads.hpdd.intel.com/public/lustre/latest-release/

Intel OPA software installations

- Intel Omni-Path IFS on Intel Omni-Path node - Download the IFS package from the below link and untar the package

rpm -ivh kernel-devel-3.10.0-514.6.1.el7.x86_64.rpm

yum -y install \

kernel-devel kernel-debuginfo-common kernel-debuginfo-common-x86_64 \

redhat-lsb redhat-lsb-core

yum -y install \

libibcommon libibverbs libibmad libibumad infinipath-psm \

libibmad-devel libibumad-devel infiniband-diags libuuid-devel \

atlas sysfsutils rpm-build redhat-rpm-config tcsh \

gcc-gfortran libstdc++-devel libhfi1 expect qperf perftest papi

tar xzvf IntelOPA-IFS.DISTRO.VERSION

cd IntelOPA-IFS.DISTRO.VERSION &&

./INSTALL -i opa_stack -i opa_stack_dev -i intel_hfi -i delta_ipoib \

-i fastfabric -i mvapich2_gcc_hfi -i mvapich2_intel_hfi \

-i openmpi_gcc_hfi -i openmpi_intel_hfi -i opafm -D opafm

- Intel Omni-Path Basic on the routers - Download the Intel Omni-Path Basic install package from the same (above) link.

cd IntelOPA-Basic.DISTRO.VERSION

./INSTALL -i opa_stack -i opa_stack_dev -i intel_hfi -i delta_ipoib \

-i fastfabric -i mvapich2_gcc_hfi -i mvapich2_intel_hfi \

-i openmpi_gcc_hfi -i openmpi_intel_hfi -i opafm -D opafm

After completing the Intel Omni-Path Basic build, you should see the following Intel Omni-Path HFI1 modules loaded in the output of lsmod:

# lsmod |grep hfi

hfi1 558596 5

ib_mad 61179 4 hfi1,ib_cm,ib_sa,ib_umad

compat 13237 7 hfi1,rdma_cm,ib_cm,ib_sa,ib_mad,ib_umad,ib_ipoib

ib_core 88311 11 hfi1,rdma_cm,ib_cm,ib_sa,iw_cm,ib_mad

Mellanox software installations

Download the tgz MOFED package from: http://www.mellanox.com/page/software_overview_ib and then run the following steps:

# tar -xvf MLNX_OFED_LINUX-DISTRO.VERSION

# cd MLNX_OFED_LINUX-DISTRO.VERSION/

# ./mlnxofedinstall --add-kernel-support --skip-repo

(Note: Before you install MLNX_OFED, review the various installation options.)

// Rebuild lustre:

./configure --with-linux=/usr/src/kernels/3.10.0-514.10.2.el7_lustre.x86_64/ --with-o2ib=/usr/src/ofa_kernel/default

make rpms

Example Configuration

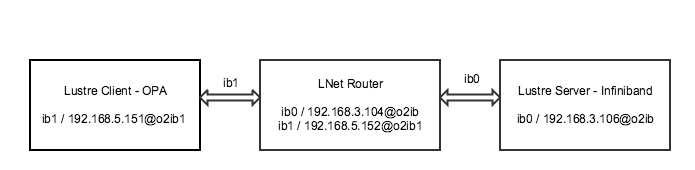

Below are detailed instructions for configuring the Lustre clients, Lustre servers and LNet router per the network topology shown in the above figure. Before following the steps, make sure that the Lustre client is installed on the Lustre clients and LNet routers and that the Lustre server is installed on the Lustre servers, from https://downloads.hpdd.intel.com/public/lustre/latest-release/

Configure Lustre Clients

Run the following commands to configure Lustre client per the topology in the figure above:

#modprobe lnet

#lnetctl lnet configure

#lnetctl net add --net o2ib1 --if ib1

#lnetctl route add --net o2ib --gateway 192.168.5.152@o2ib1

#lnetctl net show --verbose

net:

- net type: lo

local NI(s):

- nid: 0@lo

status: up

statistics:

send_count: 0

recv_count: 0

drop_count: 0

tunables:

peer_timeout: 0

peer_credits: 0

peer_buffer_credits: 0

credits: 0

lnd tunables:

peercredits_hiw: 64

map_on_demand: 32

concurrent_sends: 256

fmr_pool_size: 2048

fmr_flush_trigger: 512

fmr_cache: 1

tcp bonding: 0

dev cpt: 0

CPT: "[0,0,0,0]"

- net type: o2ib1

local NI(s):

- nid: 192.168.5.151@o2ib1

status: up

interfaces:

0: ib1

statistics:

send_count: 0

recv_count: 0

drop_count: 0

tunables:

peer_timeout: 180

peer_credits: 8

peer_buffer_credits: 0

credits: 256

lnd tunables:

peercredits_hiw: 64

map_on_demand: 32

concurrent_sends: 256

fmr_pool_size: 2048

fmr_flush_trigger: 512

fmr_cache: 1

tcp bonding: 0

dev cpt: 0

CPT: "[0,0,0,0]"

#lnetctl route show --verbose

route:

- net: o2ib

gateway: 192.168.5.152@o2ib1

hop: 1

priority: 0

state: up

To make the above configuration permanent, run the following commands:

#lnetctl export > /etc/sysconfig/lnet.conf

#echo "o2ib: { gateway: 192.168.5.152@o2ib1 }" > /etc/sysconfig/lnet_routes.conf

#systemctl enable lnet

Configure LNet Routers

Make sure that the /etc/modprobe.d/ko2iblnd.conf file is updated with the following settings. The script /usr/sbin/ko2iblnd-probe detects the OPA card and uses the ko2iblnd-opa options only if one is present; otherwise, the default settings for MLX cards are used.

alias ko2iblnd-opa ko2iblnd

options ko2iblnd-opa peer_credits=128 peer_credits_hiw=64 credits=1024 concurrent_sends=256 ntx=2048 map_on_demand=32 fmr_pool_size=2048 fmr_flush_trigger=512 fmr_cache=1 conns_per_peer=4

install ko2iblnd /usr/sbin/ko2iblnd-probe

Run the following commands to configure LNet router per the topology in the figure above:

#modprobe lnet

#lnetctl lnet configure

#lnetctl net add --net o2ib1 --if ib1

#lnetctl net add --net o2ib --if ib0

#lnetctl set routing 1

#lnetctl net show --verbose

net:

- net: lo

local NI(s):

- nid: 0@lo

status: up

statistics:

send_count: 0

recv_count: 0

drop_count: 0

tunables:

peer_timeout: 0

peer_credits: 0

peer_buffer_credits: 0

credits: 0

lnd tunables:

peercredits_hiw: 64

map_on_demand: 32

concurrent_sends: 256

fmr_pool_size: 2048

fmr_flush_trigger: 512

fmr_cache: 1

conns_per_peer: 4

tcp bonding: 0

dev cpt: 0

CPT: "[0,0,0,0]"

- net: o2ib

local NI(s):

- nid: 192.168.3.104@o2ib

status: up

interfaces:

0: ib0

statistics:

send_count: 0

recv_count: 0

drop_count: 0

tunables:

peer_timeout: 180

peer_credits: 8

peer_buffer_credits: 0

credits: 256

lnd tunables:

peercredits_hiw: 64

map_on_demand: 32

concurrent_sends: 256

fmr_pool_size: 2048

fmr_flush_trigger: 512

fmr_cache: 1

conns_per_peer: 4

tcp bonding: 0

dev cpt: 0

CPT: "[0,0,0,0]"

- net type: o2ib1

local NI(s):

- nid: 192.168.5.152@o2ib1

status: up

interfaces:

0: ib1

statistics:

send_count: 0

recv_count: 0

drop_count: 0

tunables:

peer_timeout: 180

peer_credits: 8

peer_buffer_credits: 0

credits: 256

lnd tunables:

peercredits_hiw: 64

map_on_demand: 32

concurrent_sends: 256

fmr_pool_size: 2048

fmr_flush_trigger: 512

fmr_cache: 1

conns_per_peer: 4

tcp bonding: 0

dev cpt: 0

CPT: "[0,0,0,0]"

The parameter settings from /etc/modprobe.d/ko2iblnd.conf are global and are applied to all the cards handled by the driver in the node. If the above tunables are used for both OPA and MLX, MLX will experience a 5-10% drop in performance. Therefore, if desired, the settings for MLX cards can be changed using Dynamic LNet Configuration (DLC). With the Lustre 2.10.X release, multiple NIs can each be configured with their own tunables. To do this, the tunables for the MLX card can be edited in lnet.conf as shown below:

- net type: o2ib

local NI(s):

- nid: 192.168.3.104@o2ib

status: up

interfaces:

0: ib0

tunables:

peer_timeout: 180

peer_credits: 8

peer_buffer_credits: 0

credits: 256

lnd tunables:

peercredits_hiw: 4

map_on_demand: 0

concurrent_sends: 8

fmr_pool_size: 512

fmr_flush_trigger: 384

fmr_cache: 1

conns_per_peer: 1

tcp bonding: 0

dev cpt: 0

CPT: "[0,0,0,0]"

To enable the configuration at startup:

#systemctl enable lnet

Configure Lustre Servers

To configure the Lustre server (InfiniBand based), run the following commands:

#modprobe lnet

#lnetctl lnet configure

#lnetctl net add --net o2ib --if ib0

#lnetctl route add --net o2ib1 --gateway 192.168.3.104@o2ib

#lnetctl net show --verbose

net:

- net: lo

local NI(s):

- nid: 0@lo

status: up

statistics:

send_count: 0

recv_count: 0

drop_count: 0

tunables:

peer_timeout: 0

peer_credits: 0

peer_buffer_credits: 0

credits: 0

lnd tunables:

peercredits_hiw: 4

map_on_demand: 0

concurrent_sends: 8

fmr_pool_size: 512

fmr_flush_trigger: 384

fmr_cache: 1

conns_per_peer: 1

tcp bonding: 0

dev cpt: 0

CPT: "[0,0,0,0]"

- net: o2ib

local NI(s):

- nid: 192.168.3.106@o2ib

status: up

interfaces:

0: ib0

statistics:

send_count: 0

recv_count: 0

drop_count: 0

tunables:

peer_timeout: 180

peer_credits: 8

peer_buffer_credits: 0

credits: 256

lnd tunables:

peercredits_hiw: 4

map_on_demand: 0

concurrent_sends: 8

fmr_pool_size: 512

fmr_flush_trigger: 384

fmr_cache: 1

conns_per_peer: 1

tcp bonding: 0

dev cpt: 0

CPT: "[0,0,0,0]"

#lnetctl route show --verbose

route:

- net: o2ib1

gateway: 192.168.3.104@o2ib

hop: 1

priority: 0

state: up