Imperative Recovery

(Initial import from https://wiki.hpdd.intel.com/display/PUB/Imperative+Recovery)

Revision as of 02:08, 28 February 2020

Introduction

If a Lustre server fails, the client first detects the failure when an RPC does not receive a reply within the expected processing time. The client then tries to resend the RPC to the server; if that also fails, it enters recovery and tries to reconnect to each of the servers configured for that target (OST or MDT). To avoid flooding the network with reconnect requests, the client sends reconnection RPCs only periodically.
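The cost of periodic reconnection can be illustrated with a small simulation. This is only a sketch under stated assumptions, not Lustre code: the function name `reconnect_periodically`, the server names, the 25-second interval, and the simulated clock are all hypothetical and chosen for illustration.

```python
from typing import Callable, Optional, Sequence, Tuple


def reconnect_periodically(
    servers: Sequence[str],
    try_connect: Callable[[str, float], bool],
    interval: float,
    max_attempts: int,
) -> Optional[Tuple[str, float]]:
    """Cycle through the servers configured for a target, one attempt per interval.

    Uses a simulated clock (no real sleeping). Returns the server that
    accepted the connection and the simulated time of success, or None
    if every attempt failed.
    """
    now = 0.0
    for attempt in range(max_attempts):
        server = servers[attempt % len(servers)]
        if try_connect(server, now):
            return server, now
        # Wait before the next attempt so the network is not flooded.
        now += interval
    return None


# Hypothetical scenario: the target fails over to "oss2" and is ready at t=40s.
def target_is_up(server: str, now: float) -> bool:
    return server == "oss2" and now >= 40.0


result = reconnect_periodically(["oss1", "oss2"], target_is_up,
                                interval=25.0, max_attempts=10)
print(result)
```

In this made-up scenario the target is ready at 40 seconds, but the client only reaches the right server at 75 seconds, because it has to wait for its next scheduled attempt on that server. That gap is the delay imperative recovery aims to remove.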

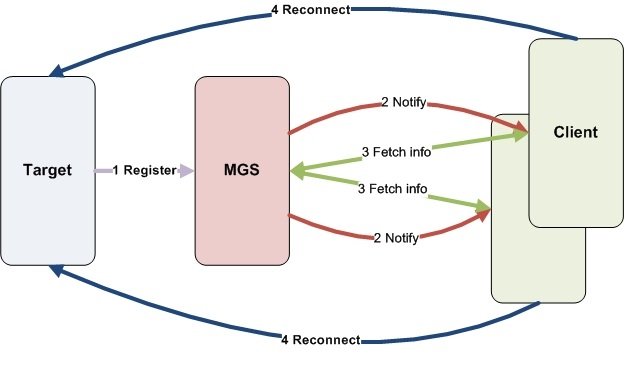

Imperative recovery reduces the time for clients to detect that targets have restarted after failure, so that they can do reconnection as soon as possible. The MGS is used as a reflector and it will notify clients when a target is newly registered.

This picture demonstrates the rough idea of the communication process for Imperative Recovery:
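The reflector idea can also be sketched in a few lines of code. The following is a minimal model, assuming a simple in-process callback mechanism; the class names `Mgs` and `Client`, their methods, and the target and server names are hypothetical and do not reflect the actual Lustre implementation or its wire protocol.

```python
from typing import Callable, Dict, List

# A listener receives (target_name, server_name) when a target registers.
Listener = Callable[[str, str], None]


class Mgs:
    """Toy reflector: forwards each target registration to all subscribed clients."""

    def __init__(self) -> None:
        self._listeners: List[Listener] = []
        self.targets: Dict[str, str] = {}

    def subscribe(self, listener: Listener) -> None:
        self._listeners.append(listener)

    def register_target(self, target: str, server: str) -> None:
        # Record where the target now lives, then notify every client.
        self.targets[target] = server
        for listener in self._listeners:
            listener(target, server)


class Client:
    """Toy client: reconnects as soon as it is told a target has registered."""

    def __init__(self, name: str, mgs: Mgs) -> None:
        self.name = name
        self.connected_to: Dict[str, str] = {}
        mgs.subscribe(self.on_target_registered)

    def on_target_registered(self, target: str, server: str) -> None:
        # No waiting for the next periodic reconnect attempt.
        self.connected_to[target] = server


mgs = Mgs()
clients = [Client(f"client{i}", mgs) for i in range(3)]
mgs.register_target("OST0000", "oss2")  # the restarted target registers
print([c.connected_to for c in clients])
```

The point of the sketch is the direction of the information flow: clients do not poll for the restarted target, they are told about it once it registers.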

High Level Design

The High Level Design is described in the Imperative Recovery Design document (Imperative_Recovery_Design.pdf).

Testing

Scalability is very important for imperative recovery because it must support at least 100K clients. For this reason, in addition to the regular unit and regression tests, there is a scalability test document to verify that it works at scale. See the Imperative Recovery Test Plan.

Presentation

Imperative recovery was presented at LUG 2011; see the presentation and the video.

Results

In our simulation test on Hyperion, a large test cluster at LLNL, we used 125 client nodes with 600 mountpoints on each client, killed an OSS, and measured the OST recovery time. With imperative recovery, recovery finished in about 60 seconds; without it, recovery took about 300 seconds.
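For scale, the figures above can be combined with simple arithmetic. The snippet below only restates the numbers quoted in the text (both recovery times are approximate); the variable names are made up for illustration.

```python
client_nodes = 125
mounts_per_node = 600
recovery_with_ir_s = 60       # approximate, from the text
recovery_without_ir_s = 300   # approximate, from the text

# Each mountpoint counts as a separate mount taking part in recovery.
total_mounts = client_nodes * mounts_per_node
speedup = recovery_without_ir_s / recovery_with_ir_s

print(f"{total_mounts} mountpoints, about {speedup:.0f}x faster recovery")
# prints "75000 mountpoints, about 5x faster recovery"
```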