Introduction to Lustre

Overview

Lustre* is an open-source, global single-namespace, POSIX-compliant, distributed parallel file system designed for scalability, high performance, and high availability. Lustre runs on Linux-based operating systems and employs a client-server network architecture. Storage is provided by a set of servers that can scale to several hundred hosts. Lustre servers for a single file system instance can, in aggregate, present up to tens of petabytes of storage to thousands of compute clients, with more than a terabyte per second of combined throughput.

Lustre scales to meet the requirements of applications running on systems ranging from small-scale HPC environments to the very largest supercomputers. It is built from object-based storage building blocks to maximize scalability.

Redundant servers support storage fail-over, while metadata and data are stored on separate servers, allowing each file system to be optimized for different workloads. Lustre can deliver fast IO to applications across high-speed network fabrics, such as Intel® Omni-Path Architecture (OPA), InfiniBand* and Ethernet.

Lustre Architecture

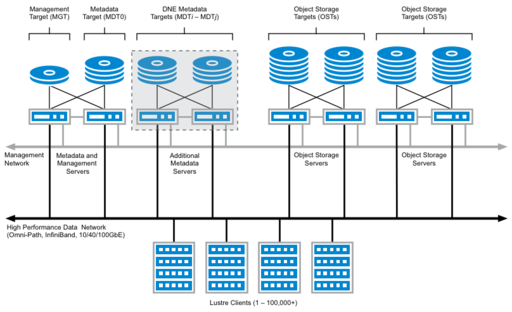

The Lustre file system architecture is designed as a scalable storage platform for computer networks and is based on distributed, object-based storage. The namespace hierarchy is stored separately from file content. Services in Lustre are separated into those supporting metadata operations, and those supporting file content operations.

There are two object types in Lustre. Data objects are simple arrays used to store the bulk data associated with a file’s content, while index objects are used to store key-value information, such as POSIX directories. Block storage management is delegated to back-end storage servers and all application-level file system access is transacted on a network fabric between clients and the storage servers.

The building blocks of a Lustre file system are the Metadata Servers and Object Storage Servers, which provide namespace operations and bulk IO services respectively. There is also the Management Server, which is a global registry for configuration information that is functionally independent of any single Lustre instance. Lustre clients provide the interface between applications and the Lustre services. It is the client software that presents a coherent, POSIX interface to end-user applications. Lustre’s network protocol, LNet, provides the communications framework that binds the services together.

Metadata Server (MDS): The MDS manages all namespace operations for a Lustre file system. A file system’s directory hierarchy and file information are contained on storage devices referred to as Metadata Targets (MDT), and the MDS provides the logical interface to this storage. A Lustre file system will always have at least one MDS and corresponding MDT, and more can be added to meet the scaling requirements of a particular environment. The MDS controls the allocation of storage objects on the Object Storage Servers for the file content when a file is created, and manages the opening and closing of files, file deletions and renames, and other namespace operations.

An MDT stores namespace metadata, such as filenames, directories, access permissions, and file layout, effectively providing the index for the data held on the file system. The ability to have multiple MDTs in a single file system allows directory subtrees to reside on the secondary MDTs, which is useful for isolating workloads that are especially metadata-intensive onto dedicated hardware (one could allocate an MDT for a specific set of projects, for example). Large, single directories can be distributed across multiple MDTs as well, providing scalability for applications that generate large numbers of files in a flat directory hierarchy.
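The idea of spreading one large directory across several MDTs can be pictured with a minimal sketch, assuming that each entry is placed by hashing its file name. The hash used here (`zlib.crc32`), the function names, and the file names are illustrative assumptions and do not reproduce Lustre's actual placement logic.

```python
import zlib
from collections import Counter


def pick_mdt(filename: str, mdt_count: int) -> int:
    """Map a file name to an MDT index with a stable hash (illustrative only)."""
    # crc32 is a stand-in chosen for convenience; it is NOT Lustre's hash.
    return zlib.crc32(filename.encode("utf-8")) % mdt_count


def distribute(filenames, mdt_count):
    """Count how many directory entries land on each MDT index."""
    return Counter(pick_mdt(name, mdt_count) for name in filenames)


if __name__ == "__main__":
    # Hypothetical flat directory with many similarly named files.
    names = [f"output.{i:06d}.dat" for i in range(100_000)]
    for mdt, entries in sorted(distribute(names, 4).items()):
        print(f"MDT index {mdt}: {entries} entries")
```

Because the placement depends only on the name, any client can work out which MDT holds a given entry without consulting a central table, which is what lets a flat directory grow beyond the limits of a single MDT in this model.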

Object Storage Server (OSS): OSSs provide bulk storage for the contents of files in a Lustre file system. One or more object storage servers (OSS) store file data on one or more object storage targets (OST), and a single Lustre file system can scale to hundreds of OSSs. A single OSS typically serves between two and eight OSTs (although more are possible), with the OSTs stored on direct-attached storage. The capacity of a Lustre file system is the sum of the capacities provided by the OSTs across all of the OSS hosts.
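The capacity relationship described above can be written down as a short sketch. The `OSS` class, host names, and OST sizes below are hypothetical values chosen for illustration only.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class OSS:
    """One object storage server and the capacities of the OSTs it serves."""
    name: str
    ost_capacities_tb: List[float] = field(default_factory=list)


def filesystem_capacity_tb(servers: List[OSS]) -> float:
    """File system capacity is the sum of all OST capacities on all OSS hosts."""
    return sum(sum(oss.ost_capacities_tb) for oss in servers)


if __name__ == "__main__":
    # Hypothetical layout: two OSS hosts, four 100 TB OSTs each.
    servers = [
        OSS("oss01", [100.0] * 4),
        OSS("oss02", [100.0] * 4),
    ]
    print(f"Total capacity: {filesystem_capacity_tb(servers)} TB")
```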

OSSs are usually configured in pairs, with each pair connected to a shared external storage enclosure that stores the OSTs. The OSTs in the enclosure are accessible via the two servers in an active-passive high availability failover configuration, to provide service continuity in the event of a server or component failure. The OSTs are mounted on only one server at a time, and are usually evenly distributed across the OSS hosts to balance performance and maximize throughput.

Management Server (MGS): The MGS stores configuration information for all the Lustre file systems in a cluster and provides this information to other Lustre hosts. Servers and clients connect to the MGS on startup in order to retrieve the configuration log for the file system. Notifications of changes to a file system’s configuration, including server restarts, are distributed by the MGS.

Persistent configuration information for all Lustre nodes is recorded by the MGS onto a storage device called a Management Target (MGT). The MGS can be paired with an MDS in a high-availability configuration, with each server connected to shared storage. Multiple Lustre file systems can be managed by a single MGS.

Clients: Applications access and use file system data by interfacing with Lustre clients. A Lustre client is represented as a file system mount point on a host and presents applications with a unified namespace for all of the files and data in the file system, using standard POSIX semantics. A Lustre file system mounted on the client operating system looks much like any other POSIX file system; each Lustre instance is presented as a separate mount point on the client’s operating system, and each client can mount several different Lustre file system instances concurrently.

Lustre Networking (LNet): LNet is the high-speed data network protocol that clients use to access the file system. LNet is designed to meet the needs of large-scale compute clusters and is optimized for very large node counts and high throughput. LNet supports Ethernet, InfiniBand*, Intel® Omni-Path Architecture (OPA), and specific compute fabrics such as Cray* Gemini.

LNet abstracts network details from the file system itself. LNet allows for full RDMA throughput and zero copy communications when available.

LNet supports routing, which provides maximum flexibility for connecting different network topologies. LNet routing provides an efficient protocol for bridging different networks, or employing different fabric technologies, such as Intel® OPA and InfiniBand. In larger file systems, with very large client counts, LNet can also support multihoming to improve performance and reliability. For more details and guidance, see the document Configuring LNet Routers for File Systems based on Intel* EE for Lustre* Software.

Lustre Scalability

| Feature | Value using LDISKFS as backend | Value using ZFS as backend | Notes |
|---|---|---|---|
| Maximum stripe count | 2000 | 2000 | Limit is 160 for ldiskfs if "ea_inode" feature is not enabled on MDT |
| Maximum stripe size | < 4GB | < 4GB | |
| Minimum stripe size | 64KB | 64KB | |
| Maximum object size | 16TB | 256TB | |
| Maximum file size | 31.25PB | 512PB* | |
| Maximum filesystem size | 512PB | 8EB* | |
| Maximum number of files or subdirectories in a single directory | 10M for 48-byte filenames. 5M for 128-byte filenames. | 2^48 | |
| Maximum number of files in the file system | 4 billion per MDT | 256 trillion per MDT | |
| Maximum filename length | 255 bytes | 255 bytes | |
| Maximum pathname length | 4096 bytes | 4096 bytes | Limited by Linux VFS |

* Theoretical value.
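The ldiskfs maximum file size in the table is consistent with multiplying the maximum stripe count by the maximum object size. The sketch below checks that arithmetic. It assumes binary units (1 TB taken as 2^40 bytes, 1 PB as 2^50 bytes) and assumes that the limit is reached by a file striped over the maximum number of objects, each at maximum size; neither assumption is stated in the source. The ZFS figures are marked theoretical and are not derived here.

```python
TIB = 2 ** 40  # bytes per "TB", assuming binary units
PIB = 2 ** 50  # bytes per "PB", assuming binary units


def max_file_size_bytes(max_stripe_count: int, max_object_size_bytes: int) -> int:
    """Upper bound for one file: every stripe object at its maximum size."""
    return max_stripe_count * max_object_size_bytes


if __name__ == "__main__":
    ldiskfs_limit = max_file_size_bytes(2000, 16 * TIB)
    print(ldiskfs_limit / PIB)  # 31.25
```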

High Availability and Data Storage Reliability

Lustre Storage

Organizations must be able to trust in the reliability and availability of their IT infrastructure resources if they are to be successful in the realization of their objectives. For storage systems, this means that users must have confidence that their data is stored persistently and reliably, without loss or corruption of information, and that the data, once stored, is available for recall on demand to an application.

Lustre is designed to keep up with the demands of the most data-intensive workloads from applications running on the very largest supercomputers in the world. Any overheads that are introduced into these environments reduce the usable bandwidth for applications and reduce overall efficiency, which in turn increases the time it takes to arrive at a result. Therefore, the Lustre file system architecture does not implement a redundant storage pattern for data objects across storage servers, due to the inherent latency and bandwidth overheads that replication and other data redundancy mechanisms introduce.

Data reliability is implemented in the storage subsystem, where it can be isolated in large part from user application-level I/O and communications. These storage systems typically comprise multi-ported enclosures, each containing an array of disks or other persistent storage devices. Arrays may be intelligent data storage systems with dedicated controllers or simple trays with no dedicated control software (usually referred to as JBODs, “Just a Bunch of Disks”).

Intelligent storage arrays abstract the complexity of managing storage redundancy through RAID configuration and offload the computational overhead of checksum and parity calculations from the host server. Dedicated storage controllers are typically configured with battery-backed cache for buffering data, which further isolates the storage services running on the host computer from the IO overhead of writing additional data blocks (e.g. in a RAID 6 or RAID 10 device layout).

JBOD enclosures are simpler and are less expensive than intelligent storage arrays (often referred to as “hardware RAID arrays”). The low cost of acquisition is offset by more complex software configuration in the host server’s operating system, as all data management tasks, including redundant layout configuration, must be performed and monitored by the host. The Linux kernel’s standard tools for storage volume management, MDRAID and LVM, provide the basic tools for managing JBOD storage and allow for complex fault tolerant disk layouts to be defined. After the block layout is defined, a file system can be formatted on top of the software volume.

LVM and MDRAID, while prevalent, are somewhat complex and can be difficult to manage efficiently on large-scale storage platforms. In recent years, however, the JBOD architecture has received a significant boost in popularity thanks to the development of advanced file system technology, as exemplified by OpenZFS, which makes storage management easier while at the same time improving reliability and data integrity. OpenZFS has disrupted many of the assumptions regarding storage management and “software RAID” since its original introduction in the Solaris operating system by Sun Microsystems (now Oracle Solaris).

The OpenZFS file system reduces the administrative complexity of maintaining software-based storage by taking a holistic view of both the file system and storage management. ZFS integrates volume management features with an advanced file system that scales efficiently and provides enhancements including end-to-end checksums for protection against data corruption, versatility in storage configuration, online data integrity verification, and a copy-on-write architecture that eliminates the need to perform offline repairs. There is no fsck in ZFS.
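The principle behind checksum-based protection against silent corruption can be illustrated with a toy block store that records a checksum on write and verifies it on every read. This is only a sketch of the general idea: the `ChecksummedStore` class is hypothetical, SHA-256 is chosen for convenience, and nothing here reflects the ZFS on-disk format or its actual checksum handling.

```python
import hashlib


class ChecksummedStore:
    """Toy block store that detects corruption at read time (illustrative only)."""

    def __init__(self):
        self._blocks = {}     # block id -> raw bytes (stands in for the device)
        self._checksums = {}  # block id -> digest, kept apart from the data

    def write(self, block_id: int, data: bytes) -> None:
        self._blocks[block_id] = data
        self._checksums[block_id] = hashlib.sha256(data).hexdigest()

    def read(self, block_id: int) -> bytes:
        data = self._blocks[block_id]
        # Verify on every read so corruption is reported, never returned.
        if hashlib.sha256(data).hexdigest() != self._checksums[block_id]:
            raise IOError(f"checksum mismatch on block {block_id}")
        return data

    def corrupt(self, block_id: int) -> None:
        """Flip one bit of a non-empty block to simulate silent corruption."""
        raw = bytearray(self._blocks[block_id])
        raw[0] ^= 0x01
        self._blocks[block_id] = bytes(raw)


if __name__ == "__main__":
    store = ChecksummedStore()
    store.write(0, b"simulation results")
    print(store.read(0))
    store.corrupt(0)
    try:
        store.read(0)
    except IOError as err:
        print("detected:", err)
```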

Advances in software-based storage architectures are also influencing storage hardware design, creating hybrid server and storage enclosures that combine storage trays with standard servers into a single high-density chassis. These integrated systems can offer higher density per rack and less complex physical integration (including reduced cabling).

High Availability for Lustre Service Continuity

Lustre servers are responsible for transacting I/O requests from applications running on a network of computers, and for managing the block storage used to maintain a persistent record of the data. Lustre clients do not have direct connections to the block storage and are often completely diskless, with no local data persistence. Because data is not replicated between Lustre servers, loss of access to a server means loss of access to the data managed by that server, which in turn means that a subset of the data managed by the Lustre file system will not be available to clients.

To protect against service failure, Lustre data is usually held on multi-ported, dedicated storage to which two or more servers are connected. The storage is subdivided into volumes or LUNs, with each LUN representing a Lustre storage target (MGT, MDT or OST). Each server that is attached to the enclosure has equal access to the storage targets and can be configured to present the storage targets to the network, although only one server is permitted to access an individual storage target in the enclosure at any given time. Lustre uses an inter-node failover model for maintaining service availability, meaning that if a server develops a fault, then any Lustre storage target managed by the failed server can be transferred to a surviving server that is connected to the same storage array.
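The failover model described above can be sketched as a mapping from storage targets to servers. Using a dictionary keyed by target means each target is owned by exactly one server at any time, mirroring the rule that only one server may access a target. The function name, host names, and target names are hypothetical, and the round-robin reassignment is an assumption for illustration; in practice this decision belongs to the cluster software.

```python
from typing import Dict, List


def fail_over(placement: Dict[str, str], failed: str,
              survivors: List[str]) -> Dict[str, str]:
    """Return a new target -> server map after the server `failed` goes down."""
    if not survivors:
        raise RuntimeError("no surviving server attached to the shared storage")
    new_placement = dict(placement)  # leave the caller's map untouched
    orphaned = sorted(t for t, s in placement.items() if s == failed)
    # Spread the orphaned targets over the surviving servers in turn.
    for i, target in enumerate(orphaned):
        new_placement[target] = survivors[i % len(survivors)]
    return new_placement


if __name__ == "__main__":
    # Hypothetical two-node pair with four OSTs spread evenly.
    placement = {
        "OST0000": "oss01", "OST0001": "oss02",
        "OST0002": "oss01", "OST0003": "oss02",
    }
    after = fail_over(placement, failed="oss01", survivors=["oss02"])
    for target, server in sorted(after.items()):
        print(target, "->", server)
```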

This configuration is usually referred to as a high-availability cluster. A single Lustre file system installation comprises several such HA clusters, each providing a discrete set of services that is a subset of the whole file system. These discrete HA clusters are the building blocks for a highly available, parallel distributed Lustre file system that can scale to tens of petabytes in capacity and to more than one terabyte per second in aggregate throughput.

Building block patterns can vary, which is a reflection of the flexibility that Lustre affords integrators and administrators when designing their high-performance storage infrastructure. The most common blueprint employs two servers joined to shared storage in an HA clustered pair topology. While HA clusters can vary in the number of servers, a two-node configuration provides the greatest overall flexibility as it represents the smallest storage building block that also provides high availability. Each building block has a well-defined capacity and measured throughput, so Lustre file systems can be designed in terms of the number of building blocks that are required to meet capacity and performance objectives.
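Sizing in terms of building blocks reduces to simple arithmetic: take whichever of the capacity and throughput targets demands more blocks. The sketch below assumes that both capacity and throughput add up linearly per block; the function name and all figures are hypothetical and serve only to show the calculation.

```python
import math


def building_blocks_needed(capacity_pb: float, throughput_gbs: float,
                           block_capacity_pb: float,
                           block_throughput_gbs: float) -> int:
    """Number of identical building blocks that meets both objectives."""
    by_capacity = math.ceil(capacity_pb / block_capacity_pb)
    by_throughput = math.ceil(throughput_gbs / block_throughput_gbs)
    # The stricter of the two requirements decides the block count.
    return max(by_capacity, by_throughput)


if __name__ == "__main__":
    # Hypothetical target: 10 PB and 200 GB/s, with blocks of 1 PB and 15 GB/s.
    print(building_blocks_needed(10, 200, 1.0, 15))  # 14
```

In this example the throughput target, not the capacity target, determines the count, which is a common outcome when blocks are built from high-capacity drives.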

An alternative to the two-node HA building block, described in Scalable high availability for Lustre with Pacemaker (video), was presented at LUG 2017 by Christopher Morrone. This is a very interesting design and merits consideration.

A single Lustre file system can scale linearly based on the number of building blocks. The minimum HA configuration for Lustre is a metadata and management building block that provides the MDS and MGS services, plus a single object storage building block for the OSS services. Using these basic units, one can create file systems with hundreds of OSSs as well as several MDSs, using HA building blocks to provide a reliable, high-performance platform.

Figure 2 shows a blueprint for typical high-availability Lustre server building blocks, one for the metadata and management services, and one for object storage.

Lustre doesn’t need to be configured for high availability – a Lustre file system will operate perfectly well without HA protection, but be aware that a fault in the server infrastructure will cause a service outage for the file system and data from the failed server component will be unavailable unless and until the component is restored. Designing Lustre for high availability is therefore recommended, and is the norm for the overwhelming majority of Lustre file system installations.

High Availability and GNU/Linux

Every major enterprise operating system offers a high-availability cluster software framework that follows this basic model. In current GNU/Linux distributions, the de facto HA cluster framework has consolidated around two software packages: Corosync for cluster membership and communications, and Pacemaker for resource management.

Operating system distributions have each developed their own administration tools around these applications. For example, Red Hat Enterprise Linux (RHEL) makes use of PCS (Pacemaker/Corosync Configuration System), while SUSE Linux Enterprise Server (SLES) has CRMSH (Cluster Resource Management Shell). Both PCS and CRMSH are open-source applications. A number of other tools are also available, including the web-based application HAWK, which interfaces to CRMSH. PCS has its own web-based UI.

While this diversity of tools has the effect of limiting portability of the specific procedures for creating and maintaining clusters, the underlying technology remains the same.