ZFS OSD Hardware Considerations

CPU

OpenZFS is a software-based storage platform and so uses CPU cycles from the host server in order to calculate parity for RAID-Z protection. The double parity implementation in OpenZFS (RAID-Z2) recommended for object storage targets (OST) uses an algorithm similar to RAID-6, but is implemented in software and not in a RAID card or a separate storage controller.
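To illustrate why software parity consumes host CPU, here is a minimal sketch of single XOR parity over equally sized blocks. It is not the OpenZFS implementation: RAID-Z2 adds a second, Galois-field parity that is not shown, and the function names `xor_parity` and `rebuild_missing` are hypothetical.

```python
from functools import reduce


def xor_parity(blocks: list[bytes]) -> bytes:
    """Return the byte-wise XOR of equally sized blocks (single parity only)."""
    if not blocks:
        raise ValueError("need at least one block")
    size = len(blocks[0])
    if any(len(block) != size for block in blocks):
        raise ValueError("all blocks must have the same length")
    # zip(*blocks) walks the blocks column by column, one byte position at a time.
    return bytes(reduce(lambda x, y: x ^ y, column) for column in zip(*blocks))


def rebuild_missing(surviving: list[bytes], parity: bytes) -> bytes:
    """Recover one lost block by XOR-ing the survivors with the parity block."""
    return xor_parity(surviving + [parity])


data = [b"AAAA", b"BBBB", b"CCCC"]
parity = xor_parity(data)
# Pretend data[1] was lost and rebuild it from the rest.
assert rebuild_missing([data[0], data[2]], parity) == data[1]
```

Every byte written passes through this kind of calculation on the host, which is why core count and cache size matter for servers that compute parity in software.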

OpenZFS uses a copy-on-write transactional object model that computes a 256-bit checksum for every data block, using checksum and hash algorithms such as Fletcher-4 and SHA-256. This makes the choice of CPU an important consideration when designing servers that use ZFS storage.
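The per-block checksum work can be sketched as follows. This is an illustrative Fletcher-4 style calculation, four running 64-bit sums over 32-bit words, and not the optimized OpenZFS code. It assumes little-endian word order and a buffer length that is a multiple of 4 bytes. SHA-256 comes from the Python standard library.

```python
import hashlib
import struct

MASK64 = (1 << 64) - 1  # accumulators wrap at 64 bits


def fletcher4(data: bytes) -> tuple[int, int, int, int]:
    """Return four 64-bit accumulators (256 bits in total) for the buffer."""
    if len(data) % 4:
        raise ValueError("length must be a multiple of 4 bytes")
    a = b = c = d = 0
    # Assumption: 32-bit words are read in little-endian order.
    for (word,) in struct.iter_unpack("<I", data):
        a = (a + word) & MASK64
        b = (b + a) & MASK64
        c = (c + b) & MASK64
        d = (d + c) & MASK64
    return a, b, c, d


block = bytes(128 * 1024)  # a zero-filled 128 KiB buffer, for illustration only
print(fletcher4(block))
print(hashlib.sha256(block).hexdigest())
```

Fletcher-4 needs only a few additions per word, while SHA-256 is considerably more expensive per byte, so the checksum choice directly affects CPU load.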

Metadata server workloads are IOPS-centric, characterized by small transactions that run at very high rates and benefit from frequency-optimized CPUs. Metadata servers typically have 2 CPU sockets, with 6 or more cores per socket. For best performance, 20 MB of CPU cache and a clock rate of 3 GHz or higher are recommended.

Object storage server workloads are throughput-centric, often with long-running, streaming transactions. Because the workloads are oriented toward streaming IO, object storage servers are less sensitive to CPU frequency than metadata servers, but they do benefit from a relatively large number of cores and a large cache. ZFS compression is recommended for OSS nodes because it can improve throughput as well as storage efficiency, although it adds CPU overhead when enabled. Object storage servers typically have 2 CPU sockets, with 10 or more cores per socket. For best performance, 30 MB of CPU cache and a clock rate of 2.4 GHz or higher are recommended.

The Intel Xeon E5 26xx processor family provides a range of options suitable for supporting Lustre server workloads across different power/performance/price points.

Memory and Hierarchical Caches

OpenZFS uses RAM extensively as the first level of cache for frequently accessed data. Ideally, all data or metadata should be stored in RAM, but that is not practical when designing file systems because it would be prohibitively expensive. OpenZFS automatically caches data in a hierarchy to optimize performance vs cost. The most frequently accessed data is cached in RAM. To deliver strong file system performance for random, often-accessed reads, OpenZFS can also use an additional layer of read cache, positioning data from slow hard drives onto SSDs or RAM.

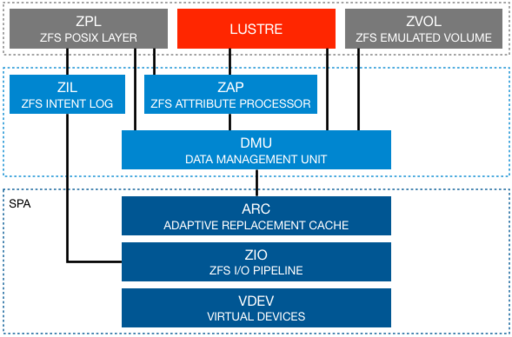

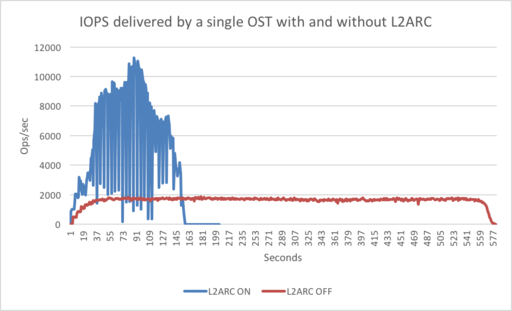

In OpenZFS, the first level of cache, called the Adaptive Replacement Cache (ARC), is held in main memory (DRAM) and can be accessed in microseconds. On an ARC miss, OpenZFS reads from disk, with millisecond latency. A second level of cache (L2ARC) on fast storage, for example NVMe devices, sits in between, complementing and supporting the main memory ARC. The L2ARC holds non-dirty ZFS data and is intended to improve the performance of random read workloads (Figure 1). If the L2ARC device fails, all reads fall back to the regular disk storage without any data loss.

Figure 1: Reading 3.84 million files of 64 KB each from 16 clients in parallel. Lustre is configured using ZFS on 4 OSSs. On each OSS, Intel configured 1 OST using 16 HDDs, with 1 Intel DC P3700 NVMe device as the L2ARC. The chart shows the operations per second delivered by a single OST with and without L2ARC.
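The lookup order through the cache tiers described above can be sketched as a toy model. It shows only the order in which tiers are consulted. It does not implement the adaptive replacement algorithm, eviction, or the way OpenZFS populates the L2ARC. The function `read_block` and the dictionary-based tiers are hypothetical.

```python
def read_block(block_id, arc, l2arc, disk):
    """Return (data, tier) using ARC -> L2ARC -> disk lookup order.

    arc and disk are dict-like; l2arc may be None to model a failed or
    absent L2ARC device, in which case reads fall through to disk.
    """
    if block_id in arc:
        return arc[block_id], "arc"
    if l2arc is not None and block_id in l2arc:
        data = l2arc[block_id]
        arc[block_id] = data  # toy behavior: promote into the first-level cache
        return data, "l2arc"
    data = disk[block_id]  # slowest tier, always authoritative
    arc[block_id] = data
    return data, "disk"


disk = {1: b"alpha", 2: b"beta"}
arc, l2arc = {}, {2: b"beta"}
print(read_block(1, arc, l2arc, disk))
print(read_block(2, arc, l2arc, disk))
print(read_block(2, {}, None, disk))  # L2ARC lost: data still served from disk
```

Because the L2ARC only holds copies of data that also exists in the pool, losing it changes where a read is served from, not whether it succeeds.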

By default, up to 50% of the available RAM will be used for the ARC, and this can be tuned as required. Sites have seen good success with as much as 75% of the available RAM allocated to ARC.
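As a worked example of the sizing above, the following sketch converts a fraction of RAM into a byte value suitable for the OpenZFS `zfs_arc_max` module parameter on Linux. The 256 GiB server size is an assumption chosen for illustration, and `arc_max_bytes` is a hypothetical helper.

```python
GIB = 1024 ** 3


def arc_max_bytes(total_ram_bytes: int, fraction: float = 0.5) -> int:
    """Return the ARC size limit in bytes for a given fraction of RAM."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in the range (0, 1]")
    return int(total_ram_bytes * fraction)


ram = 256 * GIB  # assumed server memory, for illustration only
# Emit a modprobe option line allocating 75% of RAM to the ARC.
print(f"options zfs zfs_arc_max={arc_max_bytes(ram, 0.75)}")
```

The printed line would typically be placed in a modprobe configuration file such as `/etc/modprobe.d/zfs.conf`. Check the documentation for your OpenZFS release before applying it.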

Note: The ZFS Intent Log (ZIL) is not currently used by Lustre. The ZIL provides POSIX compliance for synchronous write transactions. This is typically used by applications such as databases to ensure that data is on durable storage when a system call returns. Asynchronous IO and any writes larger than 64 KB (by default) do not write to the ZIL. As can be seen in Figure 2, Lustre bypasses the ZFS POSIX Layer and therefore also the ZIL.

As with any application, OpenZFS relies on the integrity of system DRAM to ensure that data is protected from corruption while it is held in memory. Standard DRAM modules do not have the features necessary to prevent data from being corrupted. For this reason, it is essential to use ECC RAM when designing a ZFS-based storage server. ECC RAM is able to detect and correct errors in memory locations, protecting contents.

If data held in memory is corrupted and this goes undetected, then the corrupted data will be propagated to the storage pool. Access to data in the pool is then lost. Data protection mechanisms in this scenario fail, because there is no way for the ZFS software to know that data held in memory has been altered. There is no option for recovery if you do not have a backup strategy.

OpenZFS is able to protect the storage subsystem from corrupt data and ECC memory covers the risk of corrupt memory.

Storage

OpenZFS is a combination of both a file system and a storage volume manager. By incorporating the features of a storage array controller, OpenZFS enables the use of JBOD (Just a Bunch of Disks) storage enclosures while also providing a flexible, powerful storage platform with strong data integrity protection features. The OpenZFS volume manager supports single-, double- and triple-parity RAID layouts, as well as mirroring and stripes.

A JBOD configuration not only decreases the TCO of the storage solution but can also (in some cases) increase the performance of the solution. In fact, the bandwidth provided from some storage controllers is limited by the overhead of maintaining cache coherency between two redundant controllers.

Using OpenZFS does add complexity at the operating system level. Each Lustre server sees every HDD installed in the external JBOD, and a correct device-mapper multipath configuration is required in order to provide a redundant path to each HDD.

Using a multi-ported JBOD with 90 HDDs means that a Linux Lustre server has:

- 180 Linux SCSI device paths to manage

- 90 Linux DM devices to create in the multipath configuration

The device-mapper multipath configuration is quite complex and can affect the performance characteristics of the solution. Some JBODs behave poorly when IO to a disk is load-balanced across paths, because they expect all requests for a given disk to arrive through a single interface. For this reason, when working with JBODs it is recommended to set path_grouping_policy to failover, which places each path in its own priority group so that only one path is active at a time.
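The path arithmetic and the failover setting can be sketched as below. The helpers `multipath_counts` and `failover_defaults` are hypothetical, the default of two ports per HDD is an assumption matching the 90-HDD example, and the generated stanza is only a minimal starting point. Storage vendors often require additional device-specific settings.

```python
def multipath_counts(hdds: int, ports_per_hdd: int = 2) -> tuple[int, int]:
    """Return (SCSI device paths, DM multipath devices) for a JBOD."""
    return hdds * ports_per_hdd, hdds


def failover_defaults() -> str:
    """Return a minimal multipath.conf defaults stanza using failover grouping."""
    return "defaults {\n    path_grouping_policy failover\n}\n"


paths, dm_devices = multipath_counts(90)
print(f"{paths} SCSI paths, {dm_devices} DM devices")
print(failover_defaults())
```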

Refer to the storage vendor’s documentation for configuration advice because guidance can differ between hardware manufacturers. Linux operating system distribution vendors such as Red Hat also maintain documentation on multipath configuration.

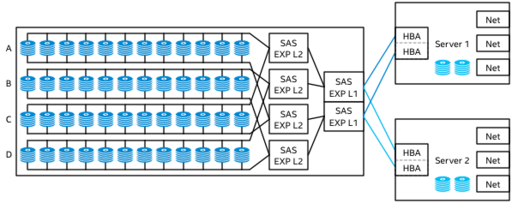

Even if a JBOD is a “simple” bunch of disks, increased density means that a 90-HDD JBOD, for example, can be designed with several levels of SAS expanders that provide connectivity from the dual-port HDDs to the SAS host bus adapter (HBA) and on to the Lustre server (Figure 3). When organizing the ZFS pools during the commissioning of a server, it is essential to know this topology in order to avoid overlapping paths or bottlenecks in the streaming data flow. In the ideal example (Figure 3), it is suggested to create four pools, each using one group (A, B, C, D) of HDDs.

In terms of ZFS pool creation, it is necessary to distinguish between metadata targets (MDTs) and object storage targets (OSTs).

For the MDTs, a configuration that can sustain a higher number of IOPS is recommended. An example of such a configuration could be 4+ SSDs or 12+ high speed HDDs with striped mirror protection.

To define the size of the MDT, consider that for ZFS, there is no fixed ratio between the number of data structures that represent objects (dnodes) and storage space at format time. The new dnodes just consume space like the file data, and new objects can be created until the MDT runs out of space. There will be at least 4.5KB of space used for each file (assuming 4KB sector size). That does not include any overhead for directories and other metadata on the MDTs, although ZFS’s metadata compression (which is enabled by default for ZFS) may reduce the actual space used by each dnode.
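A lower bound on MDT capacity follows directly from the per-file figure above. This sketch multiplies the expected file count by 4.5 KB (taken here as 4608 bytes). It deliberately excludes directories and other metadata, so real deployments need additional headroom. The helper name `min_mdt_bytes` and the file count are hypothetical.

```python
BYTES_PER_FILE = 4608  # 4.5 KB per file, assuming a 4 KB sector size


def min_mdt_bytes(num_files: int, bytes_per_file: int = BYTES_PER_FILE) -> int:
    """Return the minimum MDT space in bytes for the given number of files."""
    if num_files < 0:
        raise ValueError("num_files must not be negative")
    return num_files * bytes_per_file


files = 1_000_000_000  # assumed target file count, for illustration only
print(f"{min_mdt_bytes(files) / 1024 ** 4:.2f} TiB minimum for {files} files")
```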

For the OSTs, setting the recordsize property to 1 MB is recommended. Setting the property ashift=12 can also deliver a performance improvement. The recommended OST drive layout is a double-parity RAID-Z2 using at least 11 disks (9+2). Refer to the section titled ZFS OSDs for information on RAID-Z layouts and the application of the ashift and recordsize properties.
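The OST recommendations can be combined into a single pool creation command. The sketch below only builds the argument list for `zpool create` and does not run it. The pool name and device names are hypothetical, and in a Lustre deployment the pool may instead be created through the Lustre formatting tools, so treat this as an illustration of the properties rather than a procedure.

```python
def zpool_create_raidz2(pool: str, disks: list[str]) -> list[str]:
    """Build a 'zpool create' argument list for one RAID-Z2 vdev."""
    if len(disks) < 11:
        raise ValueError("the recommended layout uses at least 11 disks (9+2)")
    return [
        "zpool", "create",
        "-o", "ashift=12",      # pool property: 4096-byte alignment
        "-O", "recordsize=1M",  # dataset property inherited by child datasets
        pool, "raidz2", *disks,
    ]


# Hypothetical multipath device names for one 11-disk group.
disks = [f"/dev/mapper/mpath{i}" for i in range(11)]
print(" ".join(zpool_create_raidz2("ost0pool", disks)))
```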

If the design will take advantage of L2ARC devices, consider that an L2ARC device can only be associated with a single pool.

Creating multiple pools in the object storage servers means creating multiple OSTs, one per pool. For a very large Lustre installation, consider creating one pool per OSS (or a limited number), concatenating many VDEVs of 11 HDDs in RAID-Z2.

This strategy could have several benefits:

- Better utilization of the ARC memory

- Fewer L2ARC devices are needed to speed up random reads

- A reduction in the number of object storage clients and the associated memory consumption on the client side
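A single large pool built from many 11-disk RAID-Z2 vdevs can be described by repeating the vdev keyword for each group of disks. The following sketch produces that vdev specification from a flat disk list. The helper `raidz2_vdevs` and the device names are hypothetical, and the result would be appended to a `zpool create` command.

```python
def raidz2_vdevs(disks: list[str], width: int = 11) -> list[str]:
    """Split disks into RAID-Z2 vdevs of 'width' disks each."""
    if not disks or len(disks) % width:
        raise ValueError(f"disk count must be a non-zero multiple of {width}")
    spec: list[str] = []
    for start in range(0, len(disks), width):
        spec.append("raidz2")  # each keyword starts a new top-level vdev
        spec.extend(disks[start:start + width])
    return spec


# Hypothetical example: 88 disks become 8 vdevs in one pool (one OST).
disks = [f"/dev/mapper/mpath{i}" for i in range(88)]
spec = raidz2_vdevs(disks)
print(spec.count("raidz2"), "vdevs")
```

When grouping disks this way, keep the JBOD topology in mind so that the vdevs do not share a bottleneck in the SAS fabric.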

Suggested ZFS pool layout based on the different Lustre target roles:

| Target | Suggested layout | Notes |
|---|---|---|
| MGT | MIRROR: 2 HDDs | No requirement for high performance or capacity. |
| MDT | STRIPED MIRROR: 4+ SSDs or 12+ HDDs | Optimize for high IOPS workload. |
| OST | RAID-Z2: 11+ HDDs | Optimize for high throughput workload. |

Advanced Format Disks

Advanced Format Disks use 4096-byte rather than 512-byte sectors. Additional parameters must be specified to ensure that sub-sector writes, which can harm performance, are not performed. Refer to the ZFS FAQ on Advanced Format Disks for further information and the appropriate parameters to use when creating zpools and adding devices to them.
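The relationship between sector size and the ashift property is a base-2 logarithm: ashift=12 corresponds to 4096-byte sectors and ashift=9 to 512-byte sectors. A minimal sketch, with the hypothetical helper `ashift_for_sector`:

```python
def ashift_for_sector(sector_bytes: int) -> int:
    """Return log2 of the sector size, the value used for the ashift property."""
    # A power of two has exactly one bit set, so n & (n - 1) is zero.
    if sector_bytes <= 0 or sector_bytes & (sector_bytes - 1):
        raise ValueError("sector size must be a positive power of two")
    return sector_bytes.bit_length() - 1


for size in (512, 4096):
    print(size, "->", ashift_for_sector(size))
```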